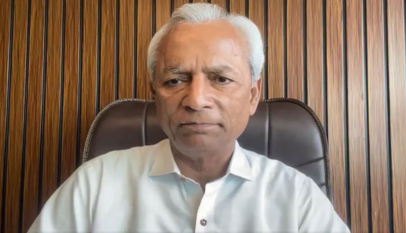

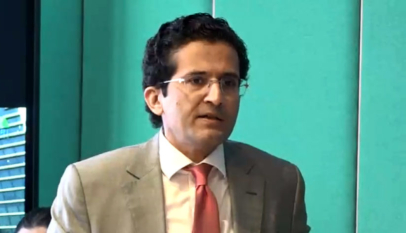

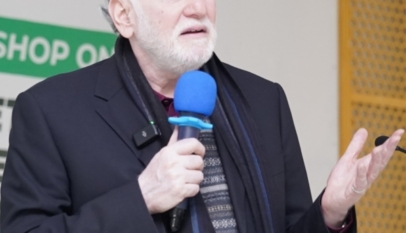

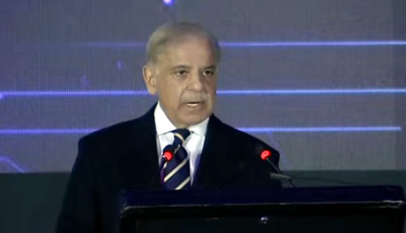

An AI application that can convert still images of people and audio tracks into animation has been developed by a team of AI researchers at Microsoft Research Asia.

The resulting animations accurately show the people in the images speaking or singing in time with the audio tracks, with fitting facial expressions.

The application, known as VASA-1, is a framework for generating lifelike talking faces of virtual characters with appealing visual affective skills (VAS) from a single static image and a speech audio clip.

“Our premiere model, VASA-1, is capable of not only producing lip movements that are exquisitely synchronised with the audio but also capturing a large spectrum of facial nuances and natural head motions that contribute to the perception of authenticity and liveliness,” wrote researchers in a paper describing the framework.

How does Microsoft’s VASA-1 work?

According to the team, the core design has two parts: a holistic model that generates facial dynamics and head movement within a face latent space, and an expressive, disentangled face latent space that is itself learned from videos.

“Our method not only delivers high video quality with realistic facial and head dynamics but also supports the online generation of 512×512 videos at up to 40 FPS with negligible starting latency. It paves the way for real-time engagements with lifelike avatars that emulate human conversational behaviours,” the researchers wrote.
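To put the quoted figures in perspective, the short Python sketch below works out what 512×512 output at 40 FPS implies for a real-time generator. It is only back-of-the-envelope arithmetic on the numbers in the quote. The function names are illustrative and have nothing to do with VASA-1's actual code, which the article does not describe.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps


def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Number of output pixels that must be generated each second."""
    return width * height * fps


# Figures taken from the researchers' quote: 512x512 video at up to 40 FPS.
budget = frame_budget_ms(40)
throughput = pixels_per_second(512, 512, 40)

print(f"{budget:.1f} ms per frame")             # 25.0 ms per frame
print(f"{throughput:,.0f} pixels per second")   # 10,485,760 pixels per second
```

In other words, keeping up with 40 FPS leaves roughly 25 milliseconds to generate each frame, which is why the researchers present the result as a step towards real-time interaction.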